When developing AI models for forecasting, data is the single most important factor impacting the accuracy of the predictive model.

Many of our users have valuable data at their fingertips, often in Excel, but may not have experience preparing data for AI modeling. In this article, we’ll share five tips and best practices for preparing your data for forecasting tasks like predicting new product sales or customer retention.

Forecasting is the process of using historical and other data to predict future outcomes. Typically, the most successful forecasting models use as much data as possible to achieve the highest accuracy. Powered by deep learning, the OneClick.ai platform allows you to incorporate both unstructured and structured data into the model, which wasn’t previously possible without long and expensive AI development cycles.

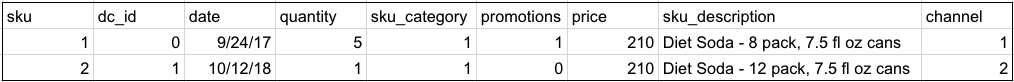

And when historical data isn’t available, like in the case of new product introductions, the platform leans on meta-information like product description, size, and color to find hidden correlations and make projections.

1. Use as much historical data as possible.

We recommend at least three years of historical data to predict one to three months of future sales; several years of history give the model multiple seasonal cycles to learn from. Our platform can process massive data sets to build and train models, and deep learning algorithms in particular thrive on large data sets, which give them more examples from which to discover correlations.
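Before importing, it can help to confirm how much history your file actually covers. The sketch below is a minimal pre-import check, assuming your sales dates are available as Python `date` objects. The helper names `history_years` and `has_enough_history` are hypothetical and are not part of any OneClick.ai API; the three-year threshold simply mirrors the guideline above.

```python
from datetime import date

# Hypothetical threshold mirroring the three-year guideline above.
MIN_YEARS = 3.0

def history_years(dates):
    """Return the span between the earliest and latest date, in years."""
    if not dates:
        return 0.0
    span_days = (max(dates) - min(dates)).days
    # 365.25 averages out leap years over a multi-year span.
    return span_days / 365.25

def has_enough_history(dates, min_years=MIN_YEARS):
    """True if the dates cover at least `min_years` of history."""
    return history_years(dates) >= min_years

sales_dates = [date(2019, 1, 1), date(2020, 6, 15), date(2022, 1, 1)]
print(round(history_years(sales_dates), 2))
print(has_enough_history(sales_dates))
```

Note that this only measures the span from first to last date; gaps in the middle of the series are worth checking separately.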

2. Include the most detailed data you have available, regardless of your forecasting window.

The more detailed the data, the better the deep learning model. Even if your prediction window is monthly, it is better to provide daily historical sales numbers if you have them. The same goes for location data (city, county, metro area, state, region, country) and for categorical information. Detailed data helps the model learn relationships between different features that may not be apparent to a human analyst.
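Daily data keeps your options open: it can always be rolled up to a monthly view, but monthly totals cannot be split back into days. The minimal sketch below shows that roll-up, assuming sales records are `(date, units)` pairs. This record layout and the `monthly_totals` helper are hypothetical, chosen only for illustration.

```python
from collections import defaultdict
from datetime import date

def monthly_totals(daily_sales):
    """Sum hypothetical (date, units) records into {(year, month): total}."""
    totals = defaultdict(int)
    for day, units in daily_sales:
        totals[(day.year, day.month)] += units
    return dict(totals)

daily = [
    (date(2023, 1, 30), 12),
    (date(2023, 1, 31), 8),
    (date(2023, 2, 1), 15),
]
print(monthly_totals(daily))
```

The reverse direction has no equivalent function: once only the monthly total of 20 units is stored, the day-by-day pattern inside January is gone.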

3. Make sure the data you’re providing can tell the whole story.

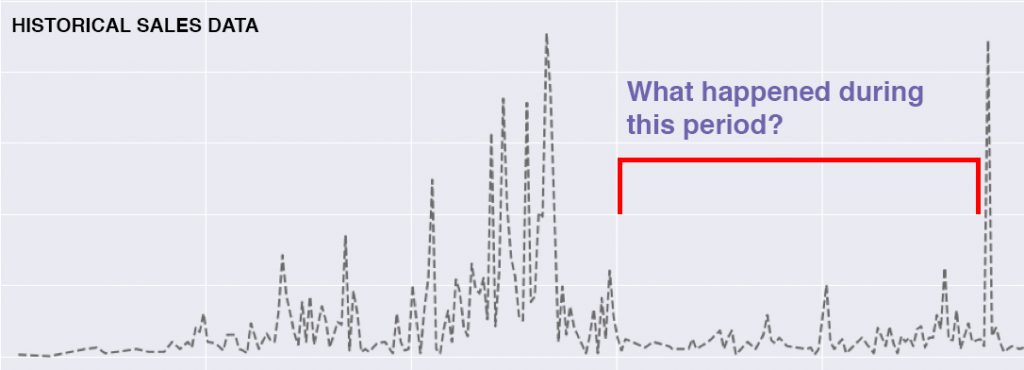

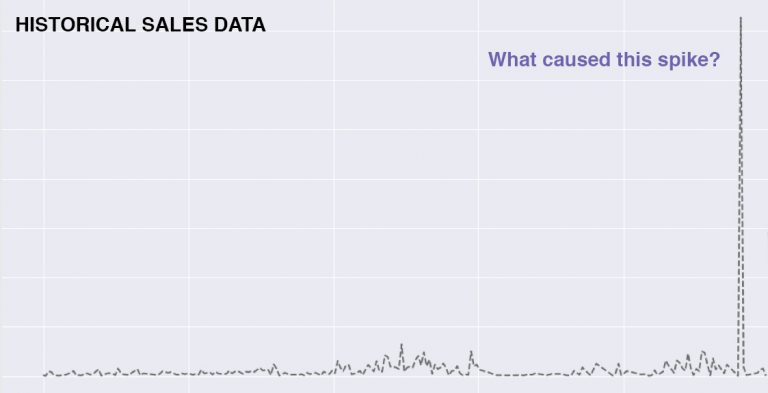

If there are irregularities in the data, review them prior to import in case there are critical factors that impacted the historical sales but are not represented in the data being used for modeling and training. In graph 1, a promotion drove a major spike in sales, yet the promotional information wasn’t initially included in the data. In graph 2, a competitor launched a similar product at a lower price point, then a marketing push drove a sudden spike. These blind spots can be avoided by having an analyst review the data prior to import for anomalies.

Graph 1

Graph 2
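To give an analyst a starting list of points to review, one simple option is to flag values that sit far from the series median using a modified z-score based on the median absolute deviation (MAD). This is a generic statistical technique, not a description of how OneClick.ai handles anomalies. The `flag_anomalies` helper is hypothetical, and the 3.5 threshold is a common rule of thumb that you should tune for your data.

```python
from statistics import median

def flag_anomalies(values, threshold=3.5):
    """Return indices whose modified z-score exceeds `threshold`.

    The median and MAD are used instead of the mean and standard
    deviation because they are less distorted by the very spikes
    we are trying to find. `values` must be non-empty.
    """
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # MAD is zero when more than half the values are identical;
        # the score is undefined, so this sketch flags nothing.
        return []
    return [
        i for i, v in enumerate(values)
        if 0.6745 * abs(v - med) / mad > threshold
    ]

weekly_sales = [100, 104, 98, 101, 97, 103, 250, 99, 102]
print(flag_anomalies(weekly_sales))
```

Each flagged index is a prompt for a human question ("was there a promotion or competitor launch that week?"), not a verdict that the data point is wrong.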

4. Building a retail or CPG forecast? Include product meta-information to improve the accuracy of new product forecasts.

New product launches are a critical part of most manufacturing and retail businesses. But what happens when you don't have any historical sales data to use in the forecast model? Meta-information like product description, product category, package size, price, and geographic data provides hints when the AI is looking for correlations. OneClick.ai was designed from the beginning to incorporate all types of data, including unstructured data such as product images and descriptions, which leads to greater accuracy.

5. Include external datasets.

While it may seem obvious, including external data such as weather, location coordinates, oil prices, and special events (music festivals, conferences, exhibitions) is critical to increasing the accuracy of your forecast. An unusually warm winter will likely depress sales of down jackets. A two-day summer music festival drives a spike in sales of sunscreen and water at a grocery store next to the festival entrance. Without this external data, the AI cannot see the contributing factors and lacks context for what is truly driving sales.
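External data usually arrives as a separate table keyed by date and often by location. The minimal sketch below left-joins it onto sales rows in plain Python. All of the data, store IDs, and field names (`avg_temp_c`, `event`) are hypothetical, invented only to illustrate the join.

```python
from datetime import date

# Hypothetical daily sales for one store.
sales = [
    {"date": date(2023, 7, 14), "store": "S1", "units": 120},
    {"date": date(2023, 7, 15), "store": "S1", "units": 340},
    {"date": date(2023, 7, 16), "store": "S1", "units": 310},
]

# Hypothetical external data, keyed by (date, store).
weather = {
    (date(2023, 7, 14), "S1"): {"avg_temp_c": 24.0},
    (date(2023, 7, 15), "S1"): {"avg_temp_c": 31.5},
}
events = {
    (date(2023, 7, 15), "S1"): "music festival",
    (date(2023, 7, 16), "S1"): "music festival",
}

def enrich(rows, weather, events):
    """Left-join external features onto each sales row by (date, store).

    Missing values stay None so gaps remain visible instead of being
    silently filled with a made-up number.
    """
    enriched = []
    for row in rows:
        key = (row["date"], row["store"])
        enriched.append({
            **row,
            "avg_temp_c": weather.get(key, {}).get("avg_temp_c"),
            "event": events.get(key),  # None on ordinary days
        })
    return enriched

for r in enrich(sales, weather, events):
    print(r)
```

A left join keeps every sales row even when external data is missing, which makes coverage gaps easy to spot before import.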

Additional resources:

- Learn more by reading our OneClick.ai Tutorial: Formatting Data for AI Training and Modeling

- For a complete guide to getting started with OneClick.ai, please refer to the OneClick.ai Walkthrough

- If you have additional questions about preparing your data for modeling on the OneClick.ai platform, please contact support@oneclick.ai